DemoFusion: Democratising High-Resolution Image Generation With No $$$

- PRIS, Beijing University of Posts and Telecommunications1

- Tsinghua University2

- University of Edinburgh3

- SketchX, University of Surrey4

Abstract

High-resolution image generation with Generative Artificial Intelligence (GenAI) has immense potential but, due to the enormous capital investment required for training, it is increasingly centralised to a few large corporations, and hidden behind paywalls. This paper aims to democratise high-resolution GenAI by advancing the frontier of high-resolution generation while remaining accessible to a broad audience. We demonstrate that existing Latent Diffusion Models (LDMs) possess untapped potential for higher-resolution image generation. Our novel DemoFusion framework seamlessly extends open-source GenAI models, employing Progressive Upscaling, Skip Residual, and Dilated Sampling mechanisms to achieve higher-resolution image generation. The progressive nature of DemoFusion requires more passes, but the intermediate results can serve as "previews", facilitating rapid prompt iteration.

Methodology

The framework of DemoFusion. (a) Starting with conventional resolution generation, DemoFusion engages an "upsample-diffuse-denoise" loop, taking the low-resolution generated results as the initialization for the higher resolution through noise inversion. Within the "upsample-diffuse-denoise" loop, a noise-inverted representation from the corresponding time-step in the preceding diffusion process serves as skip-residual as global guidance. (b) To improve the local denoising paths of MultiDiffusion, we introduce dilated sampling to establish global denoising paths, promoting more globally coherent content generation.

SDXL vs. DemoFusion

SDXL[1] can synthesis images up to a resolution of 1024×1024, while DemoFusion allows SDXL to generate images at 4×, 16×, and even higher resolutions without any tuning and substantial memory demands. All generated images are produced using a single RTX 3090 GPU.

SDXL+SR vs. DemoFusion

While the SR (Super-Resolution) model can enhance low-resolution images to appear sharper and clearer, it falls short in providing the intricate local details inherent to native high-resolution images. This distinction underscores the fundamental difference between high-resolution generation and super-resolution, highlighting why super-resolution cannot serve as a substitute for genuine high-resolution image generation. Here, we employ BSRGAN[2] for super-resolution. To better illustrate the local details, we present results at a 200% zoom-in for images with a resolution of 4096×4096.

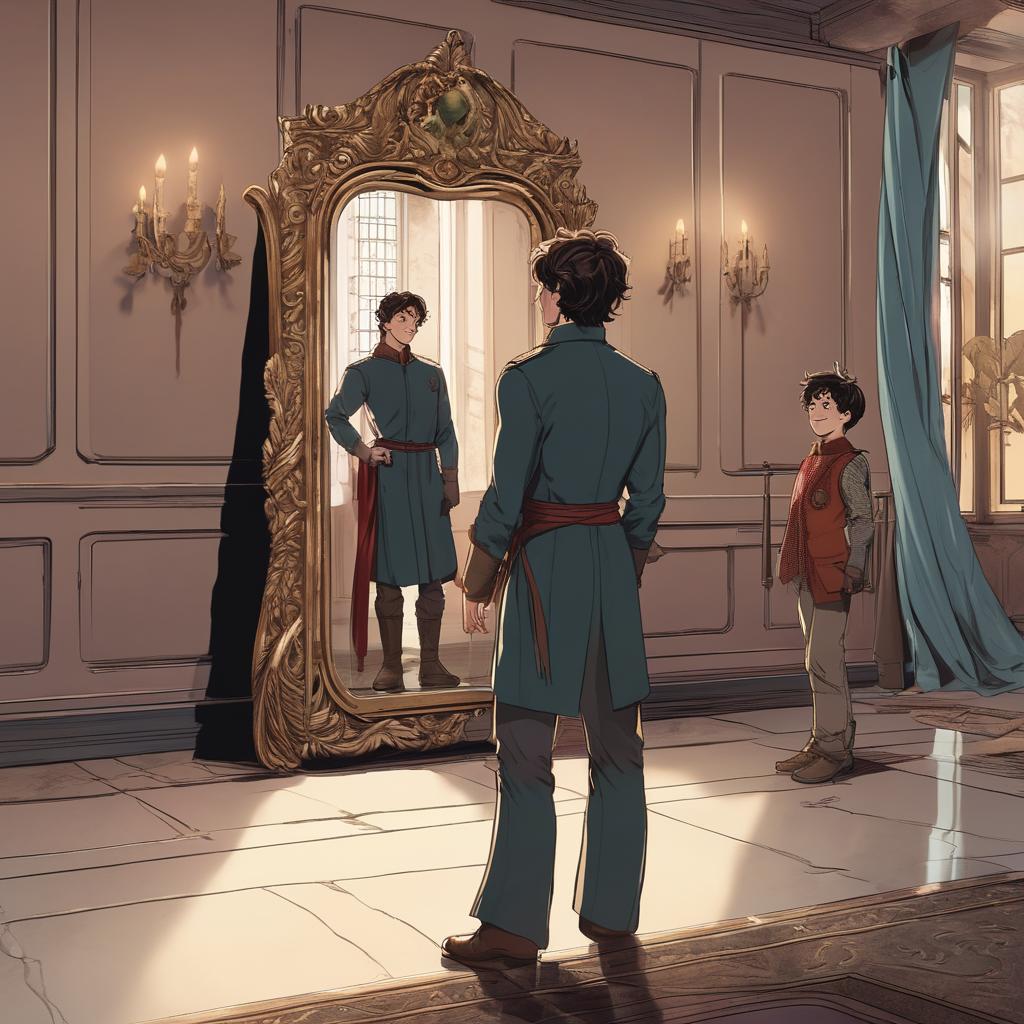

Combining with ControlNet

The tuning-free characteristic of DemoFusion enables seamless integration with many LDM-based applications. E.g., DemoFusion combined with ControlNet[3] can achieve controllable high-resolution generation.

Upscaling Real Images

Since DemoFusion works in a progressive manner, we can replace the output of phase #1 with encoded real image representations, thereby achieving upscaling of real images. However, we carefully avoid using the term "super resolution", as the outputs tend to lean towards the latent data distribution of the base LDM, making this process more akin to image generation based on a real image.

Citation

If you find this paper useful in your research, please consider citing:

@inproceedings{du2024demofusion,

title={DemoFusion: Democratising High-Resolution Image Generation With No \$\$\$},

author={Du, Ruoyi and Chang, Dongliang and Hospedales, Timothy and Song, Yi-Zhe and Ma, Zhanyu},

booktitle={CVPR},

year={2024}

}

Reference

- Podell, Dustin, et al. "Sdxl: improving latent diffusion models for high-resolution image synthesis." arXiv preprint arXiv:2307.01952. 2023.

- Zhang, Kai, et al. "Designing a practical degradation model for deep blind image super-resolution." Proceedings of the IEEE/CVF International Conference on Computer Vision. 2021.

- Zhang, Lvmin, et al. "Adding conditional control to text-to-image diffusion models." Proceedings of the IEEE/CVF International Conference on Computer Vision. 2023.

Acknowledgements

The work is done while Ruoyi Du visiting the People-Centred AI Institute at the University of Surrey.